When students submit feedback and see no visible response, they draw a quiet conclusion: their input probably did not make a difference.

This is not necessarily because nothing changed. Something may well have improved. But without a clear signal connecting their feedback to that change, students have no way of knowing.

The result is a gradual fall in response rates. The pool of respondents narrows to the most engaged or the most frustrated. Institutions find themselves making decisions based on a sample that no longer reflects the full cohort.

The issue is not that institutions fail to act. It is that they rarely communicate the connection between student input and institutional response. That gap is where trust erodes.

Closing the loop is not about responding to every suggestion. It is about making the connection between feedback and action explicit and visible.

Students understand that not everything they raise can be addressed immediately. What builds trust is knowing their input was read, considered, and taken seriously. When that becomes consistent and expected, participation grows naturally and the evidence base strengthens with it.

The goal is a feedback culture where students believe their voice leads to change, because it does, and because they can see that it does.

Collect feedback at the right moments.

Timing matters more than volume. Short check-ins at key points in the student journey produce richer data than a single survey at the end of term. Consistent question structures across faculties make it possible to track change over time, which is precisely what accreditation bodies look for.

Turn responses into clear priorities.

Raw data is not insight. Group open-text comments into themes, assign clear ownership, and decide what gets acted on before anything moves forward.
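For teams that want to prototype this step before adopting a tool, the grouping and ownership logic can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any particular platform analyses comments: the theme names, keywords, owners, and function names below are all hypothetical, and simple keyword matching stands in for whatever thematic analysis you actually use.

```python
from collections import defaultdict

# Hypothetical themes and trigger keywords (assumptions for illustration only).
THEME_KEYWORDS = {
    "lecture recordings": ["recording", "recorded"],
    "assessment feedback": ["marking", "grade", "assignment"],
}

# Hypothetical owners: each theme gets one clearly named role.
THEME_OWNERS = {
    "lecture recordings": "Head of Digital Learning",
    "assessment feedback": "Programme Coordinator",
}


def group_by_theme(comments):
    """Assign each open-text comment to every theme whose keywords it mentions."""
    themes = defaultdict(list)
    for comment in comments:
        text = comment.lower()
        matched = [
            theme
            for theme, keywords in THEME_KEYWORDS.items()
            if any(keyword in text for keyword in keywords)
        ]
        # Comments that match no theme are kept, not dropped, so someone reviews them.
        for theme in matched or ["unassigned"]:
            themes[theme].append(comment)
    return dict(themes)


def prioritise(themes):
    """Return (theme, owner, comment_count) tuples, most-mentioned theme first."""
    rows = [
        (theme, THEME_OWNERS.get(theme), len(comments))
        for theme, comments in themes.items()
    ]
    # Sort by count descending, then by theme name so ties are ordered predictably.
    return sorted(rows, key=lambda row: (-row[2], row[0]))


sample_comments = [
    "Lecture recordings were uploaded late",
    "Some sessions were never recorded",
    "Marking criteria for the assignment were unclear",
    "The library is too cold",
]
priorities = prioritise(group_by_theme(sample_comments))
```

Counting mentions is only one way to rank themes. The point of the sketch is that every theme ends up with a named owner, or is visibly unowned, before anything moves forward.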

Communicate the outcome, including what did not change.

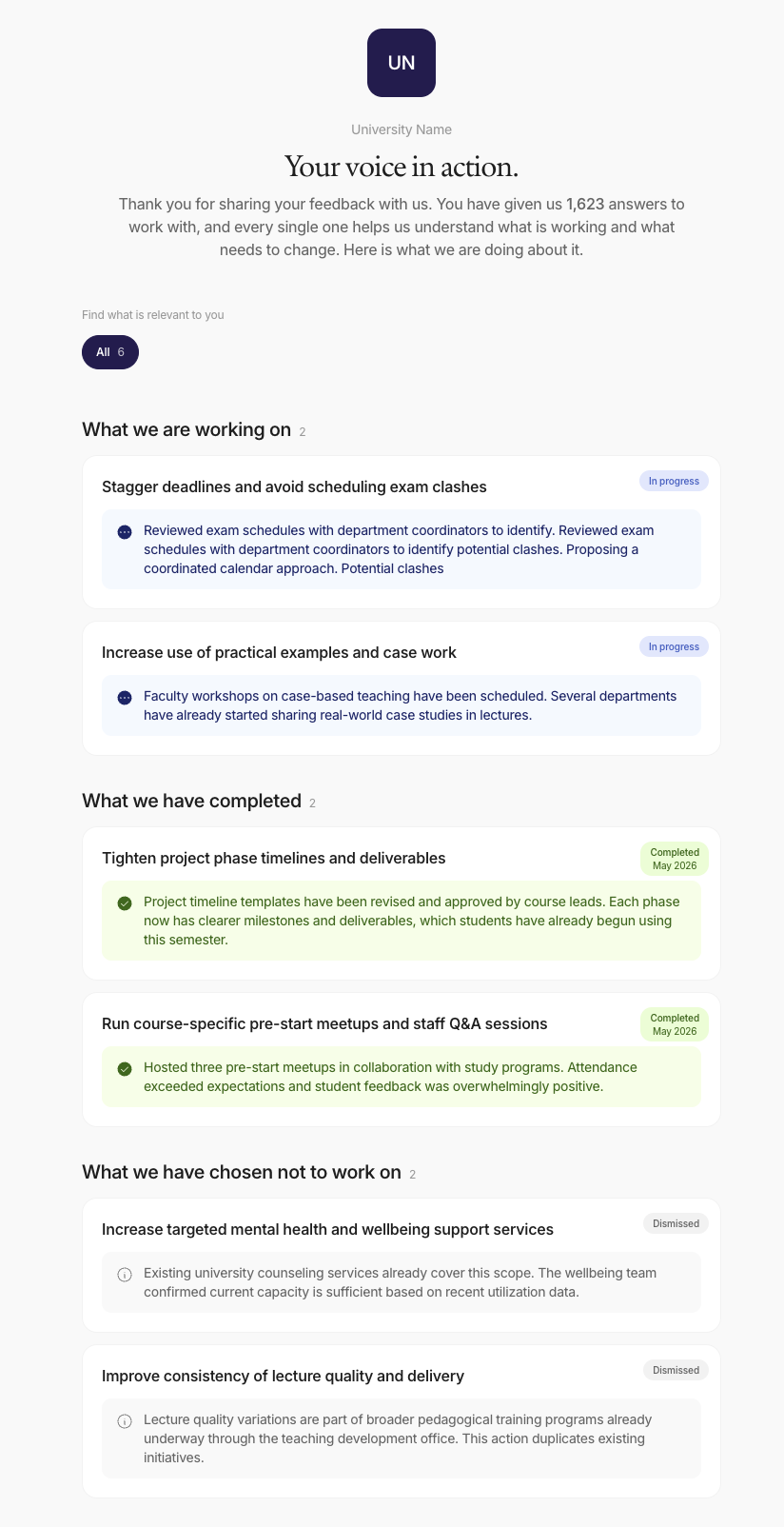

Structure your response around three things: what is being done, what has been considered but will not be taken forward and why, and what is planned for a future cycle. When announcing a change, link it explicitly back to the feedback that prompted it. Not as a formality, but as a concrete demonstration that the process works.

Students respond well to honesty about constraints. What is harder to accept is hearing nothing at all.

Every documented action taken in response to feedback becomes part of a continuous improvement trail. Over time, this record is one of your most valuable quality assurance assets: longitudinal evidence of feedback received, decisions made, and changes implemented across multiple cycles.

Closing the student feedback loop makes accreditation submissions more straightforward, because the evidence is already built into the process.

And when students see that their feedback consistently leads to a visible response, response rates stay high, the sample stays representative, and the quality of your evidence improves with each cycle.

Understanding the case for closing the loop is one thing. Operationalising it across programmes, faculties, and cycles is another.

This is something our customers told us directly. They could see the themes in the AI report. They knew what needed to change. But tracking what happened next required a separate system, a spreadsheet, or a follow-up meeting that was easy to skip. The loop was not closing because the tools to close it were not in the same place as the insight.

We built Institution Actions in response to that feedback. It turns student feedback into a concrete to-do list: AI-generated, evidence-backed, and trackable through to completion.

Here is how it works.

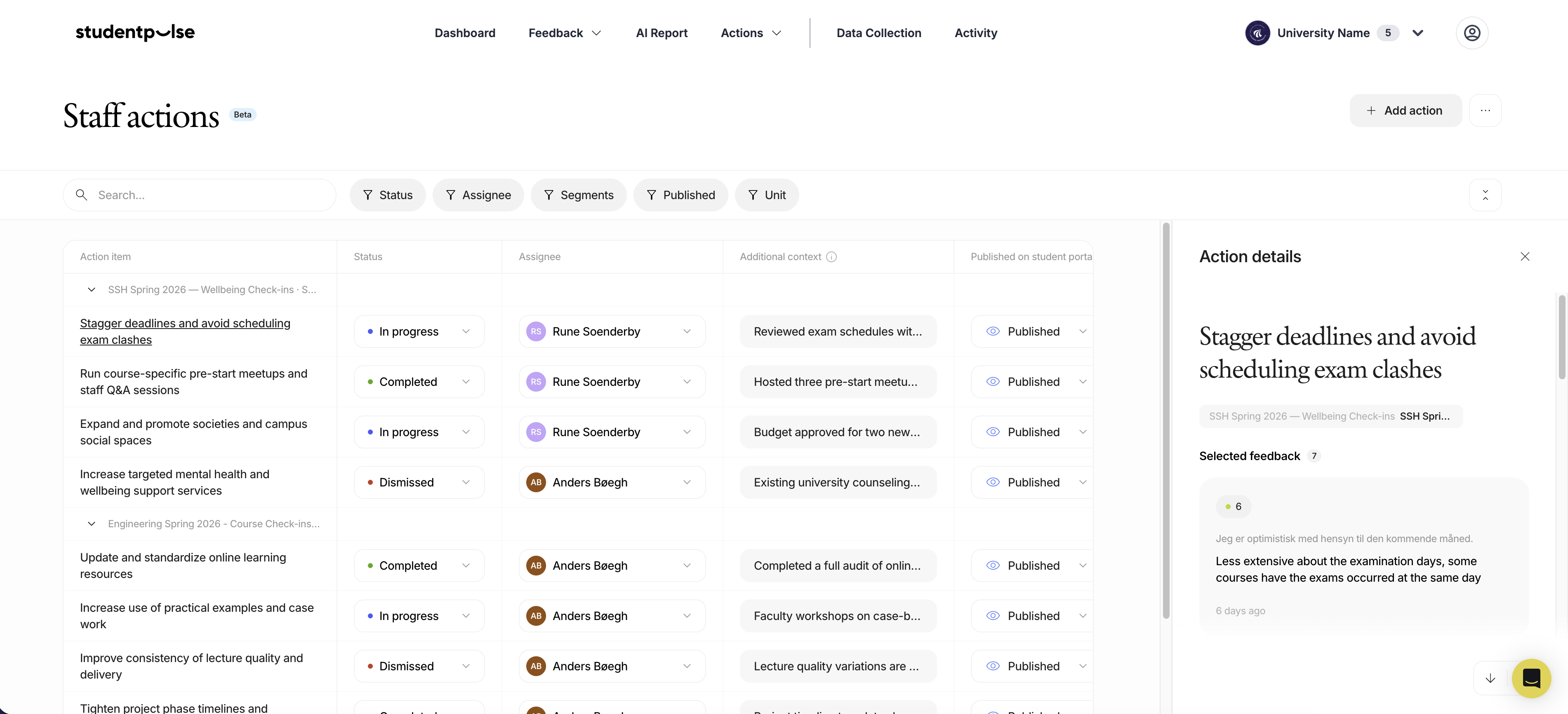

When a check-in closes, StudentPulse analyses the responses and generates a list of recommended actions. Each action is written as a concrete next step, scoped to the cohort it came from. Crucially, every action links back to the specific student comments that triggered it. Staff can read the evidence behind each recommendation directly in the platform. There is no black-box AI. Every suggestion is grounded in what students actually said.

From there, staff triage the list. They can assign an owner, set a status (In progress, Completed, or Dismissed), and add resolution notes where needed. When a status is set, the action is automatically published to the Student Portal, so students can see their input being acted on.
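For readers who think in data models, the triage flow described above can be pictured roughly as follows. This is an illustrative sketch only, not StudentPulse's actual data model or API: the class, field, and function names are assumptions made for the example. The one rule it encodes comes from the description above: an action becomes visible to students once a status is set.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    IN_PROGRESS = "In progress"
    COMPLETED = "Completed"
    DISMISSED = "Dismissed"


@dataclass
class Action:
    """A recommended action (hypothetical model, for illustration only)."""
    title: str
    cohort: str
    comment_ids: List[str]            # evidence: the comments that triggered it
    owner: Optional[str] = None
    status: Optional[Status] = None   # None means not yet triaged
    resolution_note: str = ""
    published: bool = False

    def set_status(self, status: Status, note: str = "") -> None:
        """Record the triage decision and make the action visible to students."""
        self.status = status
        if note:
            self.resolution_note = note
        # Setting any status, including Dismissed, publishes the action.
        self.published = True


def portal_view(actions: List[Action]) -> List[Action]:
    """Return only the actions students would see."""
    return [action for action in actions if action.published]


actions = [
    Action("Standardise lecture recording uploads", "BSc Year 1", ["c-101", "c-114"]),
    Action("Extend library opening hours", "BSc Year 1", ["c-120"]),
]
actions[0].owner = "Programme Coordinator"
actions[0].set_status(Status.IN_PROGRESS)
actions[1].set_status(Status.DISMISSED, note="Not feasible this term; revisit next cycle.")
```

Note that a dismissed action is published along with its resolution note. That is the code-level equivalent of telling students what will not change and why.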

That is the loop closing in practice. Not in a separate spreadsheet. Not in a follow-up email chain. Inside the same platform where the feedback came in.

For study counsellors and programme coordinators, Institution Actions gives a prioritised list of what to address. For heads of study and deans, it provides visibility into what staff are actually doing about feedback. For students, it makes follow-through visible, which builds the trust that keeps response rates high over time.

The improvement trail builds automatically. Every action taken, every suggestion set aside and explained, is recorded and ready for reporting, accreditation, or programme review. No separate effort required.

We have built a practical checklist to help institutions move from principle to process. It covers every phase: journey mapping and data collection, theme analysis and ownership assignment, communicating outcomes to students, and building your improvement trail. It is designed to be taken back to your team and used. Download it for free.

If you want to see how StudentPulse can support this at scale, book a demo.

What does "closing the student feedback loop" mean in higher education?

It means communicating back to students after their feedback has been collected, explaining what changed, what did not, and why. It is the step that connects data collection to visible action, and it is what keeps students willing to participate in future surveys.

Why do student survey response rates drop over time?

The most common reason is that students do not see a connection between their feedback and any change. When participation feels pointless, engagement falls. Closing the loop, even briefly, restores that connection and helps keep response rates high and the sample representative.

How does closing the feedback loop support quality assurance and accreditation?

Each time you document what changed in response to student feedback, you build longitudinal evidence of continuous improvement. Over multiple cycles, this becomes a structured improvement trail that accreditation bodies expect to see.

What is a "You Said, We Did" approach in student feedback?

It is a structured communication practice where institutions explicitly link student feedback to the actions taken. For example: "You told us lecture recordings were inconsistent. We have now standardised the process across all programmes." It is simple, direct, and builds the kind of trust that sustains participation.

How does StudentPulse support the "You Said, We Did" approach?

StudentPulse generates an AI report automatically when a check-in closes. Institution Actions, the new feature now live in the platform, analyses student responses and produces a list of recommended actions. Each action links back to the specific student comments that triggered it, so staff can verify the reasoning. Staff assign owners, set a status, and add resolution notes. When a status is set, the action is published to the Student Portal so students can see the outcome. The improvement trail builds as part of the process, ready for reporting and accreditation.